Operating Systems


Background

- Read File systems and operating systems on Wikipedia

Introduction

As the name suggests, an Operating System (OS) manages the operations of a computer system. As the variety of hardware grew, each device with its own control mechanism, computer users needed a middleman that knows how to deal with the hardware, including peripherals, and presents a simple interface for interacting with the computer. Programmers created the Operating System to fill this role: a program that takes care of the low-level details of a computer and its components.

Where The OF Stands[edit]

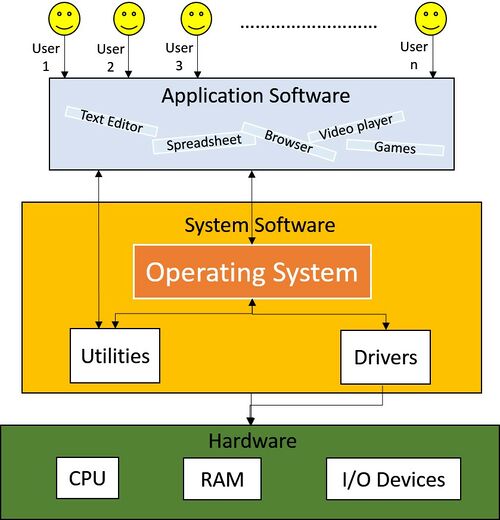

Take a look at this graphic that shows the architecture of a computer system:

We can see that the Operating System sits in the middle, acting as the sole mediator between the human operator and the computer's hardware.

The following sections will examine how an Operating System performs these tasks for us.

Program Execution

First, let's walk through how an Operating System helps a program execute:

- First, the user launches the program. This could be a double-click from inside a file explorer GUI (Graphical User Interface) or a direct command issued from a CLI (Command-line Interface).

- Second, upon receiving this launch command, the Operating System allocates a block of physical memory (RAM) dedicated to the program.

- Third, the OS loads the program code stored in secondary memory (e.g. HDD/SSD) into that RAM block.

- Finally, the Operating System instructs the CPU to run the program.


But this doesn't necessarily mean the CPU will run it right away. The CPU might start the program immediately, or the program might have to wait its turn, because a process passes through several different states as it executes.

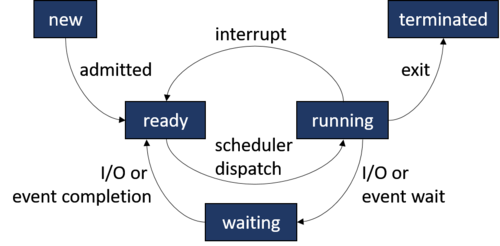

Let's take a look at it in the next section.Process Execution States[edit]

An Operating System can make a process traverse through many possible states:

At any given time, a started process can be in any one of the following states:

- new - The process is getting created.

- ready - The process is ready to run and is waiting to be assigned CPU time.

- running - The process is running. The CPU is executing the process's instructions.

- waiting - The process is paused and waiting for an event to occur, like keyboard input.

- terminated - The process has completed.
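The five states above can be modeled as a small state machine. The following is a minimal sketch, not how any particular OS implements it; the `Process` class and the `TRANSITIONS` table are hypothetical names, and the set of allowed moves is an assumed, simplified one.

```python
from enum import Enum


class State(Enum):
    NEW = "new"
    READY = "ready"
    RUNNING = "running"
    WAITING = "waiting"
    TERMINATED = "terminated"


# Assumed, simplified table of which state may follow which.
TRANSITIONS = {
    State.NEW: {State.READY},
    State.READY: {State.RUNNING},
    State.RUNNING: {State.READY, State.WAITING, State.TERMINATED},
    State.WAITING: {State.READY},
    State.TERMINATED: set(),
}


class Process:
    def __init__(self, name):
        self.name = name
        self.state = State.NEW  # every process starts out as "new"

    def move_to(self, new_state):
        # Reject moves the table does not allow, e.g. waiting -> running.
        if new_state not in TRANSITIONS[self.state]:
            raise ValueError(
                f"{self.name}: cannot go from {self.state.value} to {new_state.value}"
            )
        self.state = new_state
```

In this sketch a waiting process has to return to ready before it can run again, which mirrors the idea that the OS, not the process, decides when it gets the CPU.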

Process Scheduling

As we saw in the previous topic, a process in the ready state waits to be given CPU time before it can move to running. It's the duty of the Operating System to decide which processes move to the running state and which stay in the ready state. This function of the Operating System is called Process Scheduling. The Operating System tries to keep the CPU busy executing processes while also giving each program the shortest response time possible.

Scheduling Categories

Process scheduling can be categorized based on how the currently running process is chosen:

- Preemptive Scheduling

CPU time is divided into time slots, and each process is given a slot in which to switch to the running state and execute. If a process cannot complete its execution within its time slot, it is moved back to the ready state until it is scheduled again.

- Non-preemptive Scheduling

A process is kept in the running state until it finishes its execution or moves to a waiting state (e.g. waiting for an I/O event to complete). In the latter case, another process is given a chance to execute.
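To make the difference concrete, here is a minimal sketch that simulates both categories. It assumes round-robin as the preemptive policy and first-come, first-served as the non-preemptive one; these are two common choices, not the only ones. The function names and the `burst_times` parameter (process name mapped to the CPU time it needs) are hypothetical, and I/O waits are ignored.

```python
from collections import deque


def round_robin(burst_times, time_slot):
    """Preemptive: return the order in which processes finish."""
    ready = deque(burst_times.items())  # (name, remaining CPU time)
    finished = []
    while ready:
        name, remaining = ready.popleft()
        if remaining > time_slot:
            # Slot used up before completion: back to the ready queue.
            ready.append((name, remaining - time_slot))
        else:
            finished.append(name)
    return finished


def run_to_completion(burst_times):
    """Non-preemptive: each process runs until done, in arrival order."""
    return list(burst_times)
```

With `{"A": 5, "B": 2, "C": 3}` and a time slot of 2, the round-robin version finishes the short process B first, while the non-preemptive version makes B and C wait until A is done.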

Memory Management

The Operating System is responsible for managing the physical memory (RAM) and secondary memory (e.g. HDD/SSD) of a computer. When a program is running, the Operating System loads the program data it needs from secondary memory into blocks of main memory.


The OS keeps track of memory locations currently allocated to a program, and those that are free. Based on the currently running processes requesting memory, the Operating System analyzes and determines how much memory each of these processes can receive and when it can expect the memory to be allocated.

Virtual Memory Allocation

Operating Systems use a concept called virtual memory so that programs do not have to deal with non-sequential blocks of physical memory.

Let's assume a program foo was started and the Operating System allocated a memory block containing addresses 0 through 999 for this program. Later another program bar was started and it was allocated addresses 1000 through 1999.

| Physical Memory Address | Allocated Program |
|---|---|
| 0-999 | foo |
| 1000-1999 | bar |
| 2000-2999 | - |

Afterward, foo ran out of memory and requested more from the Operating System. But since the next sequential addresses were already allocated to bar, the OS could only grant the following memory block, addresses 2000-2999.

| Physical Memory Address | Allocated Program |
|---|---|
| 0-999 | foo |
| 1000-1999 | bar |
| 2000-2999 | foo |
| 3000-3999 | - |

As you can see, the physical addresses allocated to foo are no longer contiguous. And this was an extremely simple scenario; imagine dozens of programs and background services running at the same time with their memory blocks scattered around, and how complex tracking them would become.

Virtual Memory was introduced to overcome this issue. When an Operating System uses virtual memory, each program sees contiguous memory addresses starting from 0. Behind the scenes, the OS might be allocating physical addresses from all over the place. This is achieved through a mapping between virtual and physical memory maintained by the Operating System.

Take a look at our example that uses virtual memory this time around:

| Physical Memory Address | Virtual Memory Address | Allocated Program |
|---|---|---|
| 0-999 | 0-999 | foo |
| 1000-1999 | 0-999 | bar |
| 2000-2999 | 1000-1999 | foo |
| 3000-3999 | - | - |

As you can see, foo has been allocated a memory block containing addresses 0 through 1999, although it is virtual memory. Yes, the physical addresses might be scattered but as far as foo sees, it's been allocated a sequential block of memory to operate on.
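The mapping in the example can be sketched as a lookup table per program. This is only an illustration of the idea under the example's assumptions (1000-address blocks, two programs); `BLOCK_SIZE`, `BLOCK_TABLES`, and `to_physical` are hypothetical names, and real systems perform this translation with hardware support.

```python
BLOCK_SIZE = 1000  # each block in the example holds 1000 addresses

# Per-program map: virtual block number -> physical block number,
# following the foo/bar example.
BLOCK_TABLES = {
    "foo": {0: 0, 1: 2},
    "bar": {0: 1},
}


def to_physical(program, virtual_address):
    """Translate a program's virtual address into a physical address."""
    block, offset = divmod(virtual_address, BLOCK_SIZE)
    table = BLOCK_TABLES[program]
    if block not in table:
        raise ValueError(f"{program} has no mapping for address {virtual_address}")
    return table[block] * BLOCK_SIZE + offset
```

For instance, `to_physical("foo", 1500)` lands in foo's second block, which lives at physical addresses 2000-2999, so it yields 2500. Both programs can use virtual address 0 without colliding, because each has its own table.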

File Access

These days, computers store thousands of files in secondary memory. Several file access methods let Operating Systems handle I/O operations on disk storage efficiently. The commonly used file access methods are as follows:

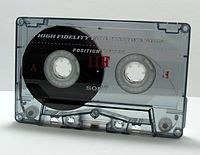

- Sequential Access

In this mechanism, as the name suggests, the file is accessed in a preset sequential order. This is the most basic and widely used method of the bunch.


- Random Access

In contrast to sequential access, the random access method accesses file records directly. It places the file pointer at the required position in the file and performs the required I/O operations there, without reading the records that come before it.

Random Access is useful when only a small part of a large file is needed, for example when reading a single record from the middle of a large data file.

- Indexed Sequential Access

Think of this method as Sequential Access performed on an indexed file. When using this method, the Operating System indexes the file in advance, giving each record a unique key. Whenever an I/O operation is needed on a record, the Indexed Sequential Access Method uses the index to locate the required record.
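The three access methods can be compared on a small in-memory "file". This sketch assumes fixed-size records so that a record's position can be turned into a byte offset; `RECORD_SIZE` and all function names are hypothetical, and a Python dictionary stands in for the index.

```python
import io

RECORD_SIZE = 8  # assumed fixed record length in bytes


def make_file(names):
    """Build an in-memory 'file' of space-padded, fixed-size records."""
    return io.BytesIO(b"".join(n.encode().ljust(RECORD_SIZE) for n in names))


def find_sequential(f, wanted):
    """Sequential access: read records in order until the match is found."""
    f.seek(0)
    position = 0
    while record := f.read(RECORD_SIZE):
        if record.strip().decode() == wanted:
            return position
        position += 1
    return None


def read_random(f, position):
    """Random access: jump straight to a record by its position."""
    f.seek(position * RECORD_SIZE)
    return f.read(RECORD_SIZE).strip().decode()


def build_index(names):
    """Index for indexed sequential access: key -> record position."""
    return {name: i for i, name in enumerate(names)}
```

Finding a record with `find_sequential` touches every record before it, `read_random` needs the position up front, and the index from `build_index` supplies that position from a key.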

Loader

Programs and libraries must be loaded into memory before they can be executed. This is performed by a component of the OS called a loader. A loader usually works like this:

- Extract the program/library instructions from its executable file in the secondary memory into RAM.

- Perform any additional tasks required to prepare the program/library to run.

- Pass control over to the program/library to execute its instructions.

Interrupt Management

When a hardware device or a software program needs urgent attention from the OS, for example, if a printer has run out of paper, or a user has clicked a button inside a program, it raises an interrupt. If a single interrupt occurs, the Operating System gives it the highest priority and allocates CPU time so it is handled straight away.

But what if multiple interrupts occur?

In that case, the interrupts are placed in a queue and handled in sequential order.
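The queueing behavior described here can be sketched with a first-in, first-out queue. This is a simplified model that follows the text; the `InterruptQueue` class and its method names are hypothetical, and real systems often also assign priorities to interrupts.

```python
from collections import deque


class InterruptQueue:
    def __init__(self):
        self.pending = deque()  # interrupts waiting to be handled
        self.handled = []       # interrupts in the order they were handled

    def raise_interrupt(self, source):
        """Record an interrupt; it waits until earlier ones are handled."""
        self.pending.append(source)

    def handle_all(self):
        """Handle pending interrupts one after another, oldest first."""
        while self.pending:
            self.handled.append(self.pending.popleft())
        return self.handled
```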

Multitasking and Multiprogramming

Let's say you need to write a text document, so you open a word processor application. You also need to browse the internet to research content, so you open a web browser as well. You want to listen to some music while you work, so you open a music player and press play. Now there are three applications open on your computer. Will your PC complain that it can run only a single application at a time?

It will not, and this is achieved with the help of multitasking. Multitasking enables an Operating System to switch its CPU allocation between multiple tasks. This switching happens so rapidly that the user is led to believe all the tasks are running simultaneously. This is in contrast to the single-tasking systems of a previous era, when a computer could only run a single task at a time.

This act or concept of running multiple programs at once is called multiprogramming.

Time Sharing

Think of time sharing as the principle of multitasking applied to multiple real-world users instead of tasks. In other words, when an OS shares the CPU time and resources of a computer between multiple users, effectively allowing several users to use the computer at the same time, it is called time sharing.

Single-user vs Multi-user Systems

A single-user Operating System might be a multiprogramming OS allowing multiple programs to run simultaneously, but it's unable to distinguish between multiple users. In contrast, a multi-user Operating System allows multiple users to operate a computer at the same time.

A multi-user system is aware of multiple users accessing it, and hence all time sharing systems are necessarily multi-user systems as well.

Input/Output Management

As we learned in our elementary school days, the three basic operations of a computer are input, processing, and output.

It follows that managing the Input devices (e.g., keyboard, mouse) and Output devices (e.g., monitor, printer) of a computer is a crucial role of the Operating System.

Device Driver

A device driver is a program, typically provided by the device manufacturer, that helps an Operating System talk to a device. It holds the specific commands and instructions required to communicate with that device.

Device Controller

In contrast to a device driver, a device controller acts as a common interface for handling a specific type of device. Controllers are hardware components either built into the motherboard or plugged into it separately. For instance, a USB controller reads signals going in and out of a USB device and communicates them to the appropriate USB driver in the OS. In other words, a controller acts as an intermediary between a physical hardware device and its device driver.

Spooling

Spooling is used to prevent slow I/O operations from bottlenecking the Operating System. For instance, assume you need to print a document. When you give the print command from a text editor, the Operating System must send the print instructions to the printer. But as you know, a printer usually takes its time to print. Do you want the whole Operating System to freeze and wait for the printing to finish before returning control to you? Probably not.

With spooling, the OS runs a spooler background process that queues up print requests as they arrive. The spooler waits until the printer finishes the current document, retrieves the next document from the spool, and sends it to the printer. This lets the Operating System continue with other work while the spooler takes care of the I/O operations.

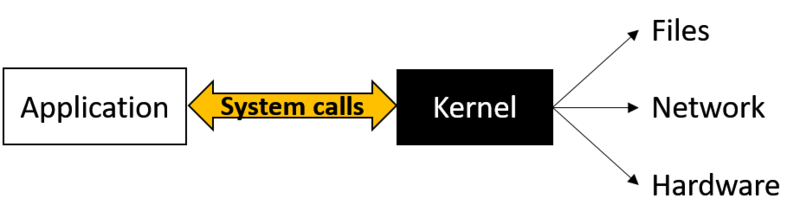
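The spooler idea can be sketched with a queue and a background thread. This is an illustration only: `start_spooler` and its `print_document` parameter are hypothetical names, and the "printer" is whatever function the caller passes in.

```python
import queue
import threading


def start_spooler(print_document):
    """Start a background spooler; returns the spool queue and its thread."""
    spool = queue.Queue()

    def worker():
        while True:
            document = spool.get()  # blocks until a request arrives
            if document is None:    # sentinel value: shut the spooler down
                break
            print_document(document)  # the slow device I/O happens here

    thread = threading.Thread(target=worker, daemon=True)
    thread.start()
    return spool, thread
```

A caller adds documents with `spool.put(...)` and continues immediately; only the worker thread waits on the slow device. Putting `None` on the queue and calling `thread.join()` stops the spooler after the earlier requests have been printed in order.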

System Calls

When an application executes, it might require access to various services such as:

- Hardware resources (e.g., create a network connection, access files on secondary memory)

- Kernel services (e.g., execute a new process, request additional memory allocation)

Operating Systems do not give applications direct access to these. Instead, the application must send a System Call to the OS kernel, and the kernel decides whether to grant the request.
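Most programs reach system calls through library wrappers. As a small sketch, the functions in Python's standard `os` module used below are thin wrappers over the corresponding file-related calls (on POSIX systems: write, lseek, read, close). The helper name `write_and_read_back` is hypothetical.

```python
import os
import tempfile


def write_and_read_back(message):
    """Write bytes to a temporary file and read them back via os-level calls."""
    fd, path = tempfile.mkstemp()  # the OS hands back a file descriptor
    try:
        os.write(fd, message)         # ask the kernel to write the bytes
        os.lseek(fd, 0, os.SEEK_SET)  # move the file pointer to the start
        data = os.read(fd, len(message))
    finally:
        os.close(fd)     # give the descriptor back to the OS
        os.remove(path)  # delete the temporary file
    return data
```

The program never touches the disk itself. It only holds a file descriptor, a number the kernel issued, and every operation on it is a request the kernel can accept or refuse.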

User Interaction

In terms of User Interaction, Operating Systems have come a long way from the non-interactive batch processing systems used in the early days. Nowadays users interact with Operating Systems using a couple of methods:

- Command Line Interface (CLI)

This is a text-based interface where the user can give commands using an input method, such as a keyboard, to command the OS to run processes and scripts. These commands can involve anything from traversing through the file hierarchy, performing read and write operations, executing I/O operations, etc.

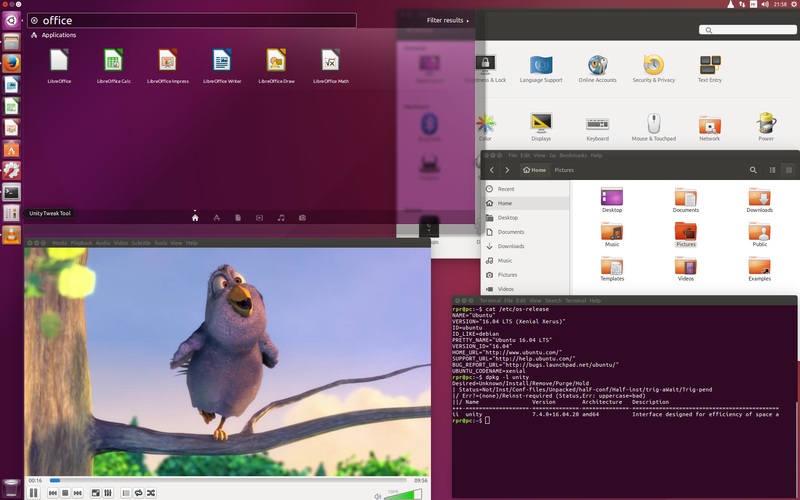

- Graphical User Interface (GUI)

This is a graphical interface created to overcome the restrictions of a CLI. The user is presented with an interface containing windows, icons, menus, etc. Users can interact with the GUI using a plethora of input devices like a mouse, keyboard, joystick, microphone, or camera just to name a few. A GUI provides a more user-friendly interaction with the OS.

Benefits of a CLI

While the innovation of the GUI provides a friendlier interface than the CLI, the CLI still holds ground as a useful interface thanks to some benefits such as:

- Scripting

Unlike a GUI, a CLI allows complex scripts to be executed exactly as written.

- Pipes (|)

A CLI user can use pipes to chain commands together. When multiple commands are joined with pipes, the output of each command acts as the input of the next.

- Redirection (> and >>)

Much like pipes, redirection connects a command to something else, in this case a file: > writes a command's output to a file, replacing its contents, and >> appends the output to the end of the file.

- Easily Automate Complex Tasks

CLI scripts can store lengthy sets of commands so they can be easily reused by multiple CLI users.

Common Operating Systems

Let's go through a comparison between the main Operating Systems:

| Compare on | Windows | macOS | Linux |
|---|---|---|---|
| Hardware | Thanks to having the largest customer base, Windows offers compatibility with a wide range of third-party peripherals. | Although macOS also allows for third-party drivers, many device manufacturers opt to provide official drivers only for Windows because of its larger user base. | Similar to macOS, although Linux allows for third-party drivers, many device manufacturers choose to focus their efforts on developing drivers for Windows and macOS instead of Linux. |
| Software | Microsoft offers a growing set of products and services in addition to its Windows Operating System line, such as the Microsoft Office suite, OneDrive, Azure, etc. Owing to its market share, Windows offers the highest compatibility with third-party software in the market. | macOS also comes with a set of built-in software, such as iTunes, Safari, Apple Music, etc. It also supports a range of third-party software available through the App Store and the internet. But compared to Windows, macOS tends to have a smaller collection of software. | Due to its smaller user base, Linux has fewer applications developed for it. However, many popular programs such as Google Chrome and a growing library of video games do offer Linux versions. Additionally, tools such as WINE (which also supports macOS) exist to run Windows software without emulating Windows. |
| Security | Being the most widely used OS of the three, Windows customarily attracts more attackers. Hence, Windows tends to face more security attacks through various types of malware. Windows has built-in antivirus software named Windows Defender, and many other tried-and-tested antivirus products are available to fight off these threats. | In contrast to Windows, macOS can be called a safer option thanks to being a comparatively minor target of attackers. macOS has built-in protections such as Gatekeeper, which checks downloaded applications before they are allowed to run. | Thanks to being a smaller target for attackers because of its smaller user base, and to its open-source nature attracting a community that keeps it maintained and safe from security issues, Linux is often regarded as the safest OS of the three platforms. |

Key Concepts

- The Operating System acts as the sole mediator between the user and the computer's hardware.

- The OS makes a process traverse through many states. At any given time a started process could be in any one of these states: new, ready, running, waiting, terminated.

- Process Scheduling is when the OS decides which processes move to the running state and which are kept in the ready state.

- Process scheduling can be categorized as preemptive or non-preemptive scheduling based on how the currently running process is chosen.

- The OS keeps track of whether memory locations are currently allocated or free. Then it determines how much and when to allocate free memory to programs.

- Virtual Memory lets each program see a contiguous range of memory addresses, even when the physical memory allocated to it is non-sequential.

- Files on Operating Systems are accessed using Sequential Access, Random Access, or Indexed Sequential Access methods.

- Programs and libraries must be loaded into memory by a loader before they can be executed.

- An interrupt is invoked when a hardware device or a software program needs urgent attention from the OS.

- Multitasking enables an Operating System to switch its CPU allocation between multiple tasks.

- Time Sharing is when an OS shares the CPU and resource time of a computer between multiple users, effectively allowing several users to concurrently use the computer's resources.

- Input/Output management within the OS is done through the use of device drivers, device controllers, and spooling.

- Applications gain access to hardware resources and kernel services using System Calls.

- Users interact with the Operating System through a Command Line Interface (CLI) or a Graphical User Interface (GUI).

Quiz